Easy to Use: If you are already familiar with standard Python scripts, you know how to use Apache Airflow.Also, check out How to Generate Airflow Dynamic DAGs: Ultimate How-to Guide 101. It’s a usual affair to see DAGs structured like the one shown below: Image Source: Apache Airflowįor more information on writing Airflow DAGs and methods to test them, do give a read here- A Comprehensive Guide for Testing Airflow DAGs 101. Oftentimes in the real world, tasks are not reliant on two or three dependencies, and they are more profoundly interconnected with each other. These individual elements contained in your workflow process are called “ Tasks”, which are arranged on the basis of their relationships and dependencies with other tasks. Think of it as a series of tasks put together with one getting executed on the successful execution of its preceding task. We’ll help clear everything for you.ĭirected Acyclic Graph or DAG is a representation of your workflow. If you are new to Apache Airflow and its workflow management space, worry not. What is Airflow DAG? Image Source: AirflowĭAG is a geekspeak in Airflow communities. Since 2016, when Airflow joined Apache’s Incubator Project, more than 200 companies have benefitted from Airflow, which includes names like Airbnb, Yahoo, PayPal, Intel, Stripe, and many more. It was written in Python and still uses Python scripts to manage workflow orchestration. The current so-called Apache Airflow is a revamp of the original project “Airflow” which started in 2014 to manage Airbnb’s complex workflows. Track the state of jobs and recover from failure.Safeguard jobs placement based on dependencies.Comprising a systemic workflow engine, Apache Airflow can: It is used to programmatically author, schedule, and monitor your existing tasks. Not only do they coordinate your actions, but also the way you manage them.Īpache Airflow is one such Open-Source Workflow Management tool to improve the way you work. 
Workflow Management Tools help you solve those concerns by organizing your workflows, campaigns, projects, and tasks.

It’s essential to keep track of activities and not get haywire in the sea of multiple tasks. When working with large teams or big projects, you would have recognized the importance of Workflow Management.

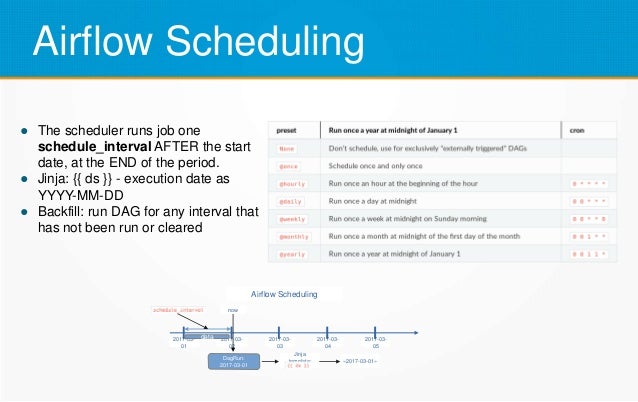

What is Airflow? Image Source: Apache Software Foundation Here’s a rundown of what we’ll cover: Table of Contents We’ll also provide a brief overview of other concepts like using multiple Airflow Schedulers and methods to optimize them. We’ll clarify the lingo and terminology used when creating and working with Airflow Scheduler. In this guide, we’ll share the fundamentals of Apache Airflow and Airflow Scheduler. Integrate with Amazon Web Services (AWS) and Google Cloud Platform (GCP).Create and handle complex task relationships.It is a robust solution and head and shoulders above the age-old cron jobs. Apache Airflow is Python-based, and it gives you the complete flexibility to define and execute your own workflows. It can read your DAGs, schedule the enclosed tasks, monitor task execution, and then trigger downstream tasks once their dependencies are met. Airflow Scheduler: Optimizing Scheduler PerformanceĪirflow Scheduler is a fantastic utility to execute your tasks.

Airflow 2.0: Running Multiple Schedulers.Airflow Scheduler: Triggers in Scheduling.Airflow Scheduler Parameters for DAG Runs.Airflow Scheduler: Scheduling Concepts and Terminology.Simplify your ETL using Hevo’s No-Code Data Pipeline.
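To make the DAG idea in this guide a little more concrete, here is a minimal sketch in plain Python. It is not Airflow's API: it only uses the standard library's `graphlib` module to model tasks and their dependencies, and to show how a downstream task becomes runnable once everything it depends on has finished. The task names (`extract`, `clean`, `enrich`, `load`, `report`) and the `run_order` helper are hypothetical, chosen purely for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each task maps to the set of tasks it depends on.
dag = {
    "extract": set(),
    "clean": {"extract"},
    "enrich": {"extract"},
    "load": {"clean", "enrich"},
    "report": {"load"},
}


def run_order(graph):
    """Return batches of tasks; a task only appears after all of its
    upstream tasks have been marked as done."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        # Tasks whose dependencies have all finished.
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        # Marking them done is what unlocks their downstream tasks.
        sorter.done(*ready)
    return batches


print(run_order(dag))
# → [['extract'], ['clean', 'enrich'], ['load'], ['report']]
```

Each inner list is a group of tasks that could run side by side. A real scheduler does far more (timing, retries, state tracking), but the "wait for upstream, then trigger downstream" rule is the same, and the "acyclic" part matters: a graph with a cycle has no valid run order, which is why `prepare()` rejects it.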
